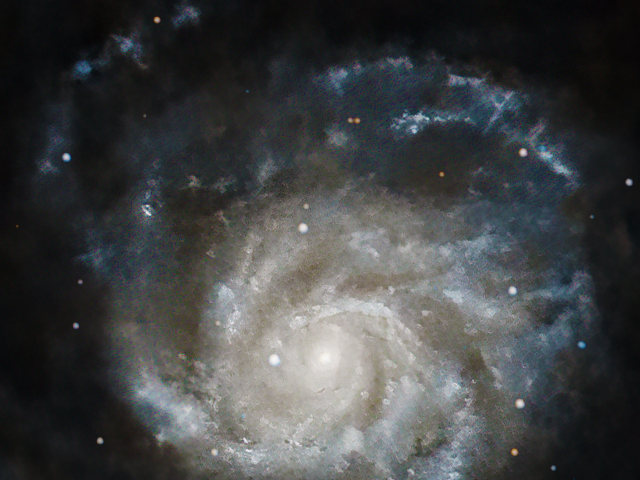

Pinwheel Galaxy, M101

The Pinwheel Galaxy is the third largest galaxy outside the Milky Way visible in the sky. It is a spectacular spiral, seen almost face-on, 22 million light years away. After my success with upscaled processing of M51, I take a closer look at processing M101 using these techniques and compare the results to a Hubble Space Telescope image scaled to the same pixel scale. These images were made with a 2" aperture telescope and just over 2 hours of data!

The cropped and scaled image above is linked to the full-size image.

Imaged starting 2022-04-29 07:31 UT. I stacked and processed 45 three-minute exposures taken with a William Optics RedCat 250/51mm telescope, Baader UV/IR cut filter, ZWO ASI 533 MC camera, SkyWatcher AZ-EQ5 mount, ASI EAF, and guide camera. All were controlled with a ZWO ASIAir Plus Controller. Processed in PixInsight, StarXTerminator, Topaz DeNoise, and Photoshop.
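As a quick check on the "just over 2 hours" figure, the total integration time follows directly from the exposure count and length given above. This is only a small arithmetic sketch; the function name is my own and not part of any tool used here.

```python
# Total integration time for the stack described above.
SUB_COUNT = 45       # number of exposures stacked (from the text)
SUB_LENGTH_MIN = 3   # length of each exposure in minutes (from the text)


def integration_time_minutes(sub_count: int, sub_length_min: float) -> float:
    """Return the total exposure time of a stack in minutes."""
    return sub_count * sub_length_min


total_min = integration_time_minutes(SUB_COUNT, SUB_LENGTH_MIN)
print(f"{total_min:.0f} minutes = {total_min / 60:.2f} hours")
```

That works out to 135 minutes, or 2 hours 15 minutes.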

A 1:1 scale 640x480 crop of this data, alternately processed at the original pixel scale, shows softer detail than the scaled-down crop of the final image above.

A 1:1 scale 640x480 crop of this data, alternately processed at the original pixel scale and then scaled up by 2x in Photoshop after all processing. This scaled image is just too soft, with no additional detail revealed.

A 1:1 scale 640x480 crop of this data, alternately processed at the original pixel scale and then scaled up by 2x in Topaz Gigapixel AI after all processing. Gigapixel AI does a slightly better job of scaling up the finished 1x image than the default Photoshop algorithm, but the result still isn't worthwhile.

Next we look at a close up of the image produced by moving the upscaling to the beginning of non-linear processing. This is the same process I used in the earlier M51 image, and was used to produce the full size image linked at the top of this page.

A 1:1 scale 640x480 crop of the image processed at 2x image scale. The 2x scaling was done after the stacked image was separated into star and nebula layers with StarXTerminator. Next, a linear MultiScale DeNoise was applied. The results were stretched non-linearly in PixInsight and exported in TIFF format for further processing.

The scaling of the stretched nebula layer was done using Topaz Gigapixel AI. The star layer was scaled using Photoshop for simplicity. The scaled nebula layer was then processed with Topaz DeNoise AI to sharpen it and reduce noise. The processed nebula and star layers were then recomposited in Photoshop. The star layer was exposure stretched to minimize the star size bloat caused by scaling. The nebula layer was processed to enhance detail, color, and luminosity contrast, identically to the processing of the comparison unscaled image.
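The order of operations here (separate layers, upscale each layer, then recombine) can be illustrated with a toy sketch. It is not the actual workflow: nearest-neighbour resampling stands in for Gigapixel AI and Photoshop scaling, and the screen blend used to recombine the layers is my assumption, since the blend mode is not stated above. The tiny arrays and function names are hypothetical.

```python
from typing import List

Image = List[List[float]]  # grayscale image, pixel values in 0.0..1.0


def upscale_2x(img: Image) -> Image:
    """Nearest-neighbour 2x upscale: each pixel becomes a 2x2 block.

    A stand-in for the real resampling tools, which are far more elaborate.
    """
    out: Image = []
    for row in img:
        wide = [value for value in row for _ in range(2)]
        out.append(wide)
        out.append(list(wide))
    return out


def screen_blend(base: Image, top: Image) -> Image:
    """Screen blend (assumed mode): result = 1 - (1 - base) * (1 - top)."""
    return [
        [1.0 - (1.0 - b) * (1.0 - t) for b, t in zip(base_row, top_row)]
        for base_row, top_row in zip(base, top)
    ]


# Hypothetical starless nebula layer and star-only layer, both 2x2 pixels.
nebula = [[0.10, 0.20], [0.30, 0.40]]
stars = [[0.0, 1.0], [0.0, 0.0]]

# Upscale each layer separately, then recombine at the 2x scale.
combined = screen_blend(upscale_2x(nebula), upscale_2x(stars))
print(len(combined), "x", len(combined[0]))
```

Scaling the layers separately is what makes it possible to treat the stars differently from the nebula, for example to limit star bloat before recombining.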

My golden reference for quality: a 1:1 scale 640x480 crop of a Hubble Space Telescope image scaled to the 2x pixel scale of my image. The image below represents an ideal image for a telescope with a resolution matching mine. Original image credit: NASA, ESA, CXC, SSC, and STScI.

The superior image is clearly the scaled-down one from the Hubble. Comparing my images processed with 2x scaling performed early and late, the image scaled near the start of non-linear processing shows detail more clearly. Compared to the Hubble image, my best image shows three deficiencies:

- Loss of detail compared even to the scaled-down Hubble image. This is not surprising when comparing an instrument with a 51 mm objective aperture to one with 2400 mm! My image was made in less than ideal conditions (high smoke from a fire and average seeing at best), while the Hubble image was made from the perfection of low Earth orbit.

- My technique for minimizing star bloat after upscaling lost the dimmer stars. I believe that this result can be improved with better processing technique.

- I only included one-shot-color data in my stack. Shooting additional frames with a narrow band filter would allow enhancement of H alpha and O III regions, as seen from the narrow band data in the Hubble image.
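To put the aperture gap in the first point into numbers, here is a rough sketch using the Rayleigh criterion, θ = 1.22 λ / D. The 550 nm wavelength (green light) is my assumption, and the calculation ignores seeing, smoke, and optical quality, so it only gives the theoretical limit for each aperture.

```python
import math

ARCSEC_PER_RADIAN = 180 * 3600 / math.pi  # about 206265


def rayleigh_limit_arcsec(aperture_mm: float, wavelength_nm: float = 550.0) -> float:
    """Theoretical angular resolution from the Rayleigh criterion.

    The 550 nm default is an assumed wavelength, not a value from the text.
    """
    theta_rad = 1.22 * (wavelength_nm * 1e-9) / (aperture_mm * 1e-3)
    return theta_rad * ARCSEC_PER_RADIAN


redcat = rayleigh_limit_arcsec(51)     # 51 mm aperture (from the text)
hubble = rayleigh_limit_arcsec(2400)   # 2400 mm aperture (from the text)

print(f"51 mm aperture:   {redcat:.2f} arcsec")
print(f"2400 mm aperture: {hubble:.3f} arcsec")
print(f"ratio: {redcat / hubble:.1f}x")
```

Because the limit scales inversely with aperture, the ratio is simply 2400 / 51, or roughly 47 times finer for the Hubble.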

Can I produce an image as good as the Hubble with a 2" telescope? Of course not! The original Hubble image has a pixel scale of 0.267 arcsec/pixel, 14 times the resolution of the 1:1 images matching my pixel scale of 3.76 arcsec/pixel. Can software with machine learning based processing to layer, upscale, sharpen, and denoise images be incorporated into a DSO workflow to produce visibly better images? Yes! I believe that similar benefits are possible for images made with larger aperture scopes.
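The "14 times" figure is just the ratio of the two pixel scales quoted above. The sketch below repeats that arithmetic and also estimates the ratio at the 2x upscaled image scale, assuming that 2x upscaling halves the arcseconds per pixel; that second figure is my own derivation rather than a number stated above.

```python
MY_SCALE = 3.76       # arcsec/pixel for the 1:1 images (from the text)
HUBBLE_SCALE = 0.267  # arcsec/pixel for the original Hubble image (from the text)


def scale_ratio(coarse: float, fine: float) -> float:
    """How many times finer the 'fine' pixel scale is than the 'coarse' one."""
    return coarse / fine


ratio_1x = scale_ratio(MY_SCALE, HUBBLE_SCALE)

# Assumption: upscaling by 2x halves the arcseconds covered by each pixel.
upscaled_scale = MY_SCALE / 2
ratio_2x = scale_ratio(upscaled_scale, HUBBLE_SCALE)

print(f"1x image: Hubble is {ratio_1x:.1f}x finer")
print(f"2x image ({upscaled_scale:.2f} arcsec/pixel): Hubble is {ratio_2x:.1f}x finer")
```

Even at the upscaled pixel scale, the original Hubble data is sampled about 7 times more finely, which is why the Hubble reference has to be scaled down for a fair comparison.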

Content created: 2022-05-14

