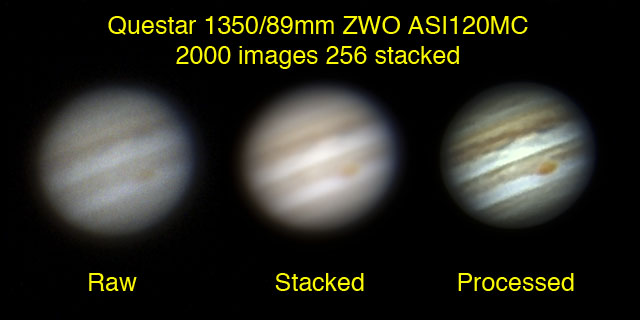

Teasing life into planetary images

At first the raw data from your camera looks like a big noisy ghost of what you saw in the eyepiece. Then slowly you recover the detail that you saw and finally details that eluded your eye. Read on to learn about the life of planetary image data.

Acquisition of raw images

There are three primary goals when you take image data:

- Get your images in focus. Don't make a difficult job impossible. Take the time to get the best focus that you can. The image will jump around on your screen and the best focus will drift. Use focusing aids, such as magnified views, a Bahtinov mask, and electronic focus highlighting measures, to make sure that your focus is spot on.

- Don't overexpose. You can recover from some underexposure when you stack your image, but overexposure is irrecoverable. It is as bad as leaving the lens cap on.

- Record as many images as you can as quickly as possible. Each image is a lottery ticket for a lucky clear image. They all need to be images of the same scene, taken before shadows have moved or the planet has turned enough for the change to be noticeable.
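One way to check the second goal before committing to a long capture is to count how many pixels in a test frame have hit the sensor maximum. This is only a sketch: `clipped_fraction` is a hypothetical helper, and the frame is a made-up list of 8-bit values rather than data from a real camera.

```python
def clipped_fraction(pixels, max_value=255):
    """Return the fraction of pixel values at or above the sensor maximum."""
    if not pixels:
        raise ValueError("pixels must not be empty")
    clipped = sum(1 for p in pixels if p >= max_value)
    return clipped / len(pixels)


# Made-up 8-bit test frame (assumption: 255 is the saturation level).
frame = [12, 40, 180, 255, 255, 90, 30, 254]
print(f"{clipped_fraction(frame):.1%} of pixels are saturated")
```

If more than a tiny fraction of the planet's disk is saturated, shortening the exposure or lowering the gain is the safer choice, since stacking can recover from some underexposure but not from clipping.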

Grading and stacking

Grading uses tools that calculate a sharpness index and your own eyes to pick the best images. By the luck of the atmospheric seeing lottery, some images will be much clearer than others. Sometimes image defects like a passing plane or a bit of dust on the sensor can fool computer tools; your eyes need to be part of the process. The spread of scores will help you determine how many images to keep. Good seeing conditions may leave you with half your images suitable for stacking. Only a few percent may be useful when the air is turbulent. Remember the garbage in, garbage out rule.
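As a rough illustration of what a sharpness index can look like, the sketch below scores each frame by the variance of a simple Laplacian (an edge detector) and keeps the best fraction. This is one common focus measure, not necessarily what any particular stacking program uses, and `laplacian_variance` and `keep_best` are hypothetical names. The two 5x5 frames are toy data with the same total light.

```python
from statistics import pvariance


def laplacian_variance(image):
    """Sharpness score: variance of a 4-neighbour Laplacian over interior pixels."""
    rows, cols = len(image), len(image[0])
    responses = []
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            lap = (image[r - 1][c] + image[r + 1][c]
                   + image[r][c - 1] + image[r][c + 1]
                   - 4 * image[r][c])
            responses.append(lap)
    return pvariance(responses)


def keep_best(frames, fraction):
    """Return the sharpest `fraction` of the frames, best first (at least one)."""
    ranked = sorted(frames, key=laplacian_variance, reverse=True)
    keep = max(1, round(len(ranked) * fraction))
    return ranked[:keep]


# Toy frames: a crisp spot and the same light smeared over nine pixels.
sharp = [
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 90, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
]
blurry = [
    [0, 0, 0, 0, 0],
    [0, 10, 10, 10, 0],
    [0, 10, 10, 10, 0],
    [0, 10, 10, 10, 0],
    [0, 0, 0, 0, 0],
]

for name, frame in (("sharp", sharp), ("blurry", blurry)):
    print(name, laplacian_variance(frame))
```

A score like this will also rate a frame with a plane trail or a hot pixel as very sharp, which is why looking at the top-ranked frames yourself remains part of the job.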

Stacking your good images reduces noise in your final image. Stacking more images improves the signal to noise ratio in your stack, but it is a game of diminishing returns. The noise is reduced by the square root of the number of images that you stack. Stacking 9 images will leave 1/3 of the noise. Stacking 100 will leave 1/10. Stacking 10,000 will leave 1% of the noise. Most of the benefit happens quickly and even a few images make a big difference. This is why grading is so important. Adding bad images into the stack will reduce noise but also reduce contrast and blur your final image.
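The square root rule is easy to check with a small simulation. The sketch below averages frames of pure random noise and measures what is left. It assumes independent Gaussian noise with the same strength in every frame, which is a simplification of real camera noise, and `stacked_noise` is a hypothetical helper.

```python
import random
from statistics import pstdev


def stacked_noise(n_frames, n_pixels=2000, sigma=10.0, seed=1):
    """Average n_frames of Gaussian noise per pixel; return the leftover noise (std dev)."""
    rng = random.Random(seed)
    stack = [
        sum(rng.gauss(0.0, sigma) for _ in range(n_frames)) / n_frames
        for _ in range(n_pixels)
    ]
    return pstdev(stack)


for n in (1, 9, 100):
    predicted = 10.0 / n ** 0.5  # sigma / sqrt(number of frames)
    print(f"{n:>3} frames: measured {stacked_noise(n):.2f}, predicted {predicted:.2f}")
```

The measured values will wobble a little around the prediction because the simulation uses a finite number of pixels.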

Processing

This is where you get to use photoshopping for good: to reveal truth rather than obscure it. Photoshop is useful, but often isn't the best tool for a particular job. I often move my data between three or four tools to produce a final image. Whatever tools you choose, there are two main jobs that you will need to accomplish at this step:

- Sharpening can pull details that you didn't realize were hidden in the fog of your image. Only some sharpening algorithms increase resolution and reveal real hidden detail. Other algorithms only fool your eye and brain, but in reality produce an inferior image.

- Light intensity management, using tools like levels and curves, fits a universe of light onto a screen or paper print. The dynamic range of the human eye can be as much as 1,000,000:1 (~ 20 photographic stops). A perfectly exposed camera image might capture a range of 10,000:1 (~ 13 stops). Your computer screen or print is probably closer to 200:1 (~ 8 stops).

The eye and brain are easy to fool. Good processing lets you see all the detail in the image, in spite of the limitations of the medium. Bad processing creates artifacts in the image or obscures some parts of it in revealing others.
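A photographic stop is a doubling of light, so a contrast ratio converts to stops with a base-2 logarithm. The short sketch below runs that arithmetic for the three contrast ratios quoted above; `stops` is a hypothetical helper name.

```python
import math


def stops(contrast_ratio):
    """Convert a contrast ratio, e.g. 10000 for 10,000:1, to photographic stops."""
    return math.log2(contrast_ratio)


for label, ratio in [("eye", 1_000_000), ("camera", 10_000), ("screen or print", 200)]:
    print(f"{label}: {ratio:,}:1 is about {stops(ratio):.1f} stops")
```

The gap between the camera and the screen, several stops, is the range that levels and curves have to squeeze into what the display can show.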

Hints for processing

The easiest way to make an image appear sharper is to add noise to it. The human brain will perceive an image with a little bit of noise added as sharper than the image without the noise. Many image processing programs have an algorithm called unsharp mask, which has its origins before digital photography. You take an image, blur it, subtract the blurred image from the original, and add a bit of that difference back into the original image. This creates artifacts along edges that trick your brain, sending it into sharpness happiness, but in reality the image has less detail.
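The unsharp mask recipe above can be sketched on a single row of pixels crossing an edge. The blur here is a simple moving average chosen for brevity (real tools usually use a Gaussian), and `box_blur` and `unsharp_mask` are hypothetical names.

```python
def box_blur(signal, radius=1):
    """Blur a 1-D signal with a moving average, repeating the edge values."""
    n = len(signal)
    out = []
    for i in range(n):
        window = [signal[min(max(j, 0), n - 1)]
                  for j in range(i - radius, i + radius + 1)]
        out.append(sum(window) / len(window))
    return out


def unsharp_mask(signal, amount=1.0, radius=1):
    """Return original + amount * (original - blurred)."""
    blurred = box_blur(signal, radius)
    return [s + amount * (s - b) for s, b in zip(signal, blurred)]


# A made-up row of pixels: dark sky on the left, bright surface on the right.
edge = [10, 10, 10, 10, 50, 50, 50, 50]
sharpened = unsharp_mask(edge)
print(sharpened)
```

The flat areas come back unchanged, while the pixel just before the edge is pushed darker than anything in the original and the pixel just after it is pushed brighter. Those overshoots are the edge artifacts: they raise local contrast without adding any information.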

Deconvolution and wavelet algorithms model blurring as the convolution of the true image with a point spread function (the combined effects of diffraction, dispersion, and optical imperfections) and mathematically reverse this, revealing the true detail present in the image. These algorithms were used to recover the first usable images from a defective Hubble Space Telescope. Later the telescope was fixed with corrective optics, but these algorithms remain a powerful tool. Using an approximate point spread function is often good enough to dramatically improve an image.
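To make the idea concrete, here is a minimal one-dimensional sketch of Richardson-Lucy deconvolution, one widely used algorithm of this kind. The text above does not name a specific algorithm, so treat this choice as an illustration only. The point spread function, the test signal, and the helper names are all assumptions, and the example is noise free, which real data never is.

```python
def convolve(signal, kernel):
    """Same-size 1-D convolution with zero padding; kernel length must be odd."""
    half = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        total = 0.0
        for k, weight in enumerate(kernel):
            j = i + half - k  # index into the signal for this kernel tap
            if 0 <= j < n:
                total += signal[j] * weight
        out.append(total)
    return out


def richardson_lucy(observed, psf, iterations=50, eps=1e-12):
    """Iteratively estimate the unblurred signal given the blurred one and the PSF."""
    flipped = psf[::-1]
    estimate = [sum(observed) / len(observed)] * len(observed)  # flat first guess
    for _ in range(iterations):
        reblurred = convolve(estimate, psf)
        ratio = [o / (r + eps) for o, r in zip(observed, reblurred)]
        correction = convolve(ratio, flipped)
        estimate = [e * c for e, c in zip(estimate, correction)]
    return estimate


# Assumed setup: a single point of light blurred by a small symmetric PSF.
truth = [0.0] * 15
truth[7] = 100.0
psf = [0.25, 0.5, 0.25]
observed = convolve(truth, psf)
restored = richardson_lucy(observed, psf)

print("blurred peak: ", max(observed))
print("restored peak:", round(max(restored), 1))
```

Each pass blurs the current estimate, compares it with the observed data, and nudges the estimate toward whatever would blur into the observation. With noisy data, too many iterations start sharpening the noise, so the iteration count is something to tune by eye.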

Resolution recovery sharpening algorithms should be applied only to linear data, before any intensity manipulation is performed on the data.

Intensity masking directs sharpening or level adjustment tools to operate on only the regions of the image where they reveal image details and not where they obscure them. It is especially useful for avoiding sharpening artifacts like the light and dark rings (onion skin effect) that sharpening algorithms can create around the edge of a planet.
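A simple form of intensity masking can be sketched as a per-pixel blend, where the mask weight comes from the brightness of the original image. The thresholds, the pixel values, and the name `masked_blend` are hypothetical, and real tools usually also blur the mask so the transition is smooth.

```python
def masked_blend(original, sharpened, low, high):
    """Blend per pixel; the mask weight ramps from 0 to 1 as brightness goes low to high."""
    out = []
    for o, s in zip(original, sharpened):
        weight = min(1.0, max(0.0, (o - low) / (high - low)))
        out.append((1 - weight) * o + weight * s)
    return out


# Made-up row of pixels running from dark sky across the limb onto the disk.
original = [2, 5, 40, 120, 200, 210]
sharpened = [-8, -3, 30, 135, 205, 212]  # sharpening dug a dark ring into the sky

result = masked_blend(original, sharpened, low=20, high=100)
print(result)
```

The dark sky pixels keep their original values, so the ring outside the limb disappears, while the bright disk takes the sharpened values in full.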

Using sharpening or light curve intensity tools takes a light touch. There is a soft boundary between revealing the data hidden in the image and making the image look hard or overprocessed. It is an art that I struggle with for every image that I produce.

I reworked the finished image with a slightly lighter touch in the enhancement and tried out stacking with AutoStakkert 2 virtualized on macOS. The stacking results were similar, but stacking is easier in AutoStakkert.

Content created: 2017-04-10 and last modified: 2017-04-12

